SDN DATAPATH TEST

Software-Defined Networking (SDN) has been regarded as the future of network architectures for a few years, promising unmatched infrastructure scalability, more flexible management, vendor independence, and faster design of novel network services. Now that it is the present, it continuously feeds multiple research areas with unprecedented challenges, and it has been adopted by almost all device vendors. Despite the fervent activity in the scientific community on devising novel network architectures and services that take advantage of SDN, most papers validate their proposals on ad-hoc testbeds, and little attention has been devoted to determining the practical applicability of these approaches on currently available devices. Moreover, even though OpenFlow is now fairly mature, vendors seem to lag behind in terms of the functionality supported on their devices. In this project we define a methodology for testing the readiness of a device to operate in an SDN-based infrastructure, combining existing OpenFlow conformance test tools with custom tests. We also draw a picture of the current status of OpenFlow implementations by applying our methodology to commercially available devices.

Test Description

This page documents the testing methodology we used, so that it can be reapplied by others. The methodology consists of the Ryu test and of custom tests that we designed to assess the equipment. The page also reports some results of the tests we have conducted so far on the equipment available to us.

Ryu Test

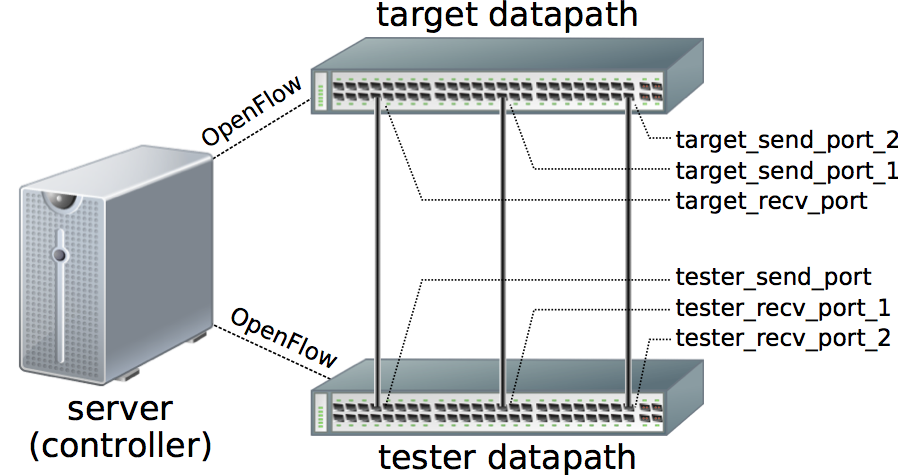

We used the OpenFlow switch test tool included in the Ryu controller framework. During internal communications, some vendors confirmed that they also use Ryu to check the compliance of their devices. We used version 3.18 of Ryu, which includes 991 test cases that span the OpenFlow 1.3 specification. Running the Ryu test suite involves three devices connected according to the topology in the figure: a target datapath, namely the switch that is subject to the Ryu test; a tester datapath, namely a separate OpenFlow switch that generates the test packets sent to the target datapath and collects any packets forwarded by the target datapath; and a server machine, where the Ryu test suite is executed. While the test is running, Ryu acts as an OpenFlow controller connected to both the target and the tester switch. The typical actions performed to execute a test case are as follows:

1. Ryu sends an OFPT_FLOW_MOD message to the target datapath, to install a flow entry that matches packets entering a specific port (target_recv_port in the figure) with specific headers. This flow entry usually applies a single action, which is to forward matching packets out of target_send_port 1. In addition, it may manipulate packet headers (e.g., push/pop of VLAN tags, TTL alteration). Ryu also sends an OFPT_FLOW_MOD message to the tester datapath, to install a flow entry that matches packets entering tester_recv_port 1 and sends them to the controller.

2. Ryu sends an OFPT_PACKET_OUT message to the tester datapath, instructing it to produce a test packet to be sent to the target datapath.

3. The tester datapath sends the requested test packet out of its tester_send_port to the target datapath.

4. The flow entry on the target datapath is matched, and the packet is forwarded out of target_send_port 1.

5. The tester datapath receives the test packet through tester_recv_port 1. The flow entry installed at step 1 matches the packet, and the tester datapath sends an OFPT_PACKET_IN message to Ryu, enclosing the test packet.

6. Ryu checks whether the headers and contents of the received (and possibly altered) copy of the test packet coincide with the expected ones, and reports the outcome of the test case accordingly.
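
The sequence of actions above can be sketched as a small, self-contained simulation. This is a toy model rather than Ryu's implementation: `ToyDatapath`, `FlowEntry`, `run_test_case`, the `CONTROLLER` marker, and the port numbers are all hypothetical names introduced for illustration, and packets are modelled as plain dictionaries of header fields.

```python
from dataclasses import dataclass, field

CONTROLLER = -1            # hypothetical marker meaning "send to the controller"
TARGET_RECV_PORT = 1       # assumed port numbering, for illustration only
TARGET_SEND_PORT_1 = 2
TESTER_RECV_PORT_1 = 2


@dataclass
class FlowEntry:
    in_port: int                                  # port the packet must arrive on
    match: dict                                   # header fields that must be equal
    out_port: int                                 # output port, or CONTROLLER
    rewrite: dict = field(default_factory=dict)   # optional header modifications


class ToyDatapath:
    """Hypothetical stand-in for an OpenFlow switch with a single flow table."""

    def __init__(self):
        self.flows = []

    def flow_mod(self, entry):
        # Models the effect of receiving an OFPT_FLOW_MOD (add) message.
        self.flows.append(entry)

    def process(self, in_port, packet):
        # Return (out_port, packet) for the first matching entry, else None.
        for e in self.flows:
            if e.in_port == in_port and all(
                packet.get(k) == v for k, v in e.match.items()
            ):
                return e.out_port, {**packet, **e.rewrite}
        return None


def run_test_case(target, target_entry, test_packet, expected_packet):
    tester = ToyDatapath()
    # Step 1: install flow entries on the target and on the tester.
    target.flow_mod(target_entry)
    tester.flow_mod(FlowEntry(TESTER_RECV_PORT_1, {}, CONTROLLER))
    # Steps 2-3: the tester emits the test packet towards target_recv_port.
    # Step 4: the target matches the packet and forwards it.
    forwarded = target.process(TARGET_RECV_PORT, test_packet)
    if forwarded is None or forwarded[0] != TARGET_SEND_PORT_1:
        return False
    # Step 5: the tester receives the packet and hands it to the controller.
    packet_in = tester.process(TESTER_RECV_PORT_1, forwarded[1])
    if packet_in is None or packet_in[0] != CONTROLLER:
        return False
    # Step 6: compare the received packet with the expected one.
    return packet_in[1] == expected_packet


# Example: a flow entry that rewrites the TTL of IPv4 packets.
ttl_entry = FlowEntry(TARGET_RECV_PORT, {"eth_type": 0x0800},
                      TARGET_SEND_PORT_1, rewrite={"ip_ttl": 63})
ok = run_test_case(ToyDatapath(), ttl_entry,
                   test_packet={"eth_type": 0x0800, "ip_ttl": 64},
                   expected_packet={"eth_type": 0x0800, "ip_ttl": 63})
print("OK" if ok else "ERROR")
```

In the real tool the two datapaths are physical or virtual switches and the messages travel over OpenFlow control channels; the sketch only mirrors the order of the steps and the final comparison.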

The Ryu test cases are grouped into the following classes:

- [action] – Tests in this class install on the target datapath flow entries that perform simple packet forwarding and various types of packet modification (e.g., TTL alteration, push/pop of VLAN and MPLS headers).

- [group] – For these tests Ryu installs on the target datapath flow entries that forward a copy of the test packet out of multiple ports, thus testing support for group actions; this test class requires the additional target send port 2 on the target datapath and the corresponding tester_recv_port 2 on the tester datapath.

- [match] – The flow entries installed on the target datapath to perform these tests consider an extensive assortment of match conditions applied to various header fields, exploit multiple flow tables, apply bitmasks on the matched fields, and alternatively forward packets out of a port or directly to the controller.

- [meter] – To perform tests in this class, the meter table of the target datapath is suitably populated, then Ryu emits packets from the tester datapath at a configured rate and checks if the target datapath withstands a certain packet transfer throughput.
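
As an illustration of the bitmask matching exercised by the [match] class, the sketch below applies the OpenFlow 1.3 masking rule, in which a 1 bit in the mask means that the corresponding bit of the field must match and a 0 bit means "don't care". The helper names `masked_match` and `ipv4_to_int` are hypothetical and are not part of Ryu.

```python
import ipaddress


def ipv4_to_int(addr: str) -> int:
    # Convert a dotted-quad IPv4 address to its 32-bit integer value.
    return int(ipaddress.IPv4Address(addr))


def masked_match(value: int, pattern: int, mask: int) -> bool:
    # The field matches when it agrees with the pattern on every bit set in the mask.
    return (value & mask) == (pattern & mask)


# A packet from 10.0.1.7 checked against 10.0.0.0 with two different masks.
src = ipv4_to_int("10.0.1.7")
wide = masked_match(src, ipv4_to_int("10.0.0.0"), ipv4_to_int("255.255.0.0"))
narrow = masked_match(src, ipv4_to_int("10.0.0.0"), ipv4_to_int("255.255.255.0"))
print(wide, narrow)
```

The same rule applies to any maskable field (e.g., MAC addresses or metadata), only with a different bit width.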

Custom Tests

The Ryu test suite is more than enough to determine the OpenFlow features supported by a datapath. However, we believe a few additional tests are required to build a more complete picture of the readiness of a device to operate in an SDN-based network architecture. We therefore defined a set of custom tests, listed in the table below, which we executed both to verify additional datapath features required to exploit the full potential of SDN and to investigate more deeply the causes of failures reported by the Ryu test.

Custom Tests Table

| Test codename | Test type (performance, functionality, controller, scenario, etc.) | Test description and goals | Prerequisites and scenario (e.g., topology) | Expected outcome |
|---|---|---|---|---|
| SB_PERFORMANCE | Performance | Verify the switching performance of the device under heavy traffic load generated by a Spirent SmartBits SMB-600B device with Gigabit Ethernet ports | Two ports of the DUT are connected to a traffic generator. Flow entries are installed on the DUT to direct traffic from one port to the other. Flow tables are empty except for these flow entries. | Assessment of the forwarding performance |
| RYU_TEST_1.3 | Functionality | Run the complete OpenFlow 1.3 compliance suite available in Ryu | Two OpenFlow-capable devices connected as in http://tocai.dia.uniroma3.it/compunet-wiki/index.php/Installing_and_setting_up_OpenFlow_tools#Ryu_test_tool | Log of supported/unsupported features |
| OF_STD_NORMALPORT | Functionality | Check whether OF rules that process packets using the standard TCP/IP stack can be installed (action: NORMAL) | None | The NORMAL action is supported |
| OF_GROUP_ACTION_BEHAVIOR | Functionality | Check the behavior of a group action that contains multiple output actions | None | A copy of the packet is sent on each port specified in the group action list |
| OF_STD_MASKABLE_FIELDS | Functionality | Check which header fields can be matched using bit masks | None | At least source/destination MACs and IPs (both IPv4 and IPv6) are maskable; possibly the metadata |
| OF_STD_VLAN_LABELS | Functionality | Check whether VLAN headers can be pushed/popped on packets | None | Each action can push/pop multiple VLAN tags |
| OF_STD_VLAN_MULTI_LABELS | Functionality | Check whether a single action can push/pop multiple tags | None | Each action can push/pop multiple VLAN tags |
| DP_VLAN_STACK_SIZE | Functionality | Check the maximum number of VLAN tags that can be pushed on (and popped from) a packet in transit | None | The datapath supports handling of at least 3 VLAN tags |
| OF_STD_MPLS_LABELS | Functionality | Check whether MPLS labels can be pushed/popped on packets | None | Each action can push/pop multiple MPLS labels |
| OF_STD_MPLS_MULTI_LABELS | Functionality | Check whether a single action can push/pop multiple labels | None | Each action can push/pop multiple MPLS labels |
| DP_MPLS_STACK_SIZE | Functionality | Check the maximum number of MPLS labels that can be pushed on (and popped from) a packet in transit | None | The datapath supports handling of at least 3 MPLS labels |
| DP_RECIRCULATION | Functionality | Check the ability of the datapath implementation to recirculate packets (i.e., match on different protocol headers once outermost headers have been stripped) | None | Recirculation is fully supported, even when multiple headers (e.g., MPLS labels) are stripped |
| DP_HARD_RECIRCULATION | Functionality | Force recirculation by creating a physical loop on the device | Standard recirculation does not work | Recirculation is fully supported, even when multiple headers (e.g., MPLS labels) are stripped |
| STP_BLOCKED_PORTS | Functionality | Check whether ports that have been put in the blocked state by STP can still forward packets when used as output ports by OF rules | STP support | The ability to forward packets using OF is independent of the STP port status |
| RP_BLOCKED_PORTS | Functionality | Check whether ports that have been put in the blocked state by Ring Protection can still forward packets when used as output ports by OF rules | Ring Protection support | The ability to forward packets using OF is independent of the Ring Protection port status |
| DP_AGGREGATED_PORTS | Functionality | Check whether frames can be forwarded into an aggregated link logical port | Link Aggregation support | |
| DP_MCLAG_OPENFLOW | Functionality | Check whether an OpenFlow datapath instance can be spread over the switches involved in an MC-LAG group | Multi-Chassis Link Aggregation support | |
| DP_MCLAG_AGGREGATED_PORTS | Functionality | Check whether frames can be forwarded into aggregated ports spanning two physical switches | MCLAG_OPENFLOW test success | |
| DP_EMERGENCY_FLOWS | Functionality | Check whether emergency flow rules are kept active when controller reachability is restored, or whether previously defined normal flow rules are re-activated instead | None | |
| CTRL_INBAND_L2 | Scenario | Set up a scenario with an in-band controller, involving hosts in the same IP subnet | Switches contact the controller using the operational network. OF rules can be set up as described in the document linked by this cell | In-band control can be successfully accomplished |
| CTRL_INBAND_L3 | Scenario | Set up a scenario with an in-band controller, involving hosts in different IP subnets | Switches contact the controller using the operational network. OF rules can be set up as described in the document linked by this cell | In-band control can be successfully accomplished |
| DP_HIDDEN_FLOW_TABLES | Functionality | Check whether the datapath maintains hidden (i.e., "datapath" or "kernel") flow entries that can influence its behavior | None | None in particular |
| DP_PERFORMANCE | Performance | Verify the switching performance of the device under heavy traffic load | Known traffic patterns are sent through the device, where a known (and minimal) set of OF entries is installed to relay them to an output port. Possibly, multiple input and/or output(?) ports can be used. | Assessment of the forwarding performance |
| DP_PERFORMANCE_TABLESIZE | Performance | Verify the switching performance of the device under heavy traffic load when flow tables of different sizes are used | Known traffic patterns are sent through the device, and an increasingly large number of flow entries is installed. Possibly, multiple input and/or output(?) ports can be used. | Assessment of the forwarding performance |
| OF_STD_METADATA | Functionality | Check whether the datapath supports flow entries that manipulate or access metadata | None | Metadata are supported |
| CTRL_OPERATION | Controller | Check the protocol used for the operation of the vendor-supplied controller | Vendor supplies controller | The controller works successfully and basic operations (e.g., topology reconstruction) can be performed without writing custom code |
| CTRL_OF_COMPLIANCE | Controller | Check whether the vendor-supplied controller is capable of speaking OpenFlow and whether other SDN protocols are used | None | The controller supports talking with the datapath using OpenFlow, enabling interoperability scenarios |
| CTRL_TOPOLOGY | Controller | Investigate the technologies used by the vendor-supplied controller to reconstruct the topology (LLDP?) | None | The controller adopts standard LLDP-based topology reconstruction |
| DP_MPLS_MATCH | Scenario | Check the ability of the datapath to match on a non-outermost label | | |
| DP_MULTICTRL_BEHAVIOR | Functionality | Check whether multiple controllers can be defined on one datapath and which modes are supported: failover, load balancing, etc. | None | |
| DP_CLI_OF | Functionality | Check whether flow rules can be added from the CLI | None | |
| DP_FLOW_INSERTION_TIME | Performance | Measure the time needed for the controller to insert thousands of flow rules | None | |
| DP_IPV6 | Functionality | Check whether IPv6 matches and actions are supported | None | |
| DP_CPU_LOAD | Performance | Monitor CPU load when inserting flow rules and when switching traffic | None | |
| DP_TRUE_CLONE | Functionality | Check whether different instances of the same switch/vendor behave the same | At least 2 switches of the same model | |
| MAX_DPID | Functionality | Determine the maximum number of DPIDs that can be defined | None | The DPID is a 64-bit field (lower 48 bits used for the MAC, upper 16 are vendor-defined) |
| INTER_DP | Functionality | Check whether packets can be switched between datapaths | MAX_DPID test outcome is at least 2 DPIDs | |
| CTRL_VPN | Functionality | Check the operation of a scenario in which an SDN VPN controller coordinates MPLS-based VPN services | MPLS VPN topology | SDN VPNs are correctly configured and traffic can be forwarded as expected |
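
The DPID layout mentioned for MAX_DPID (a 64-bit value whose lower 48 bits carry a MAC address and whose upper 16 bits are vendor-defined) can be illustrated with a short sketch. The function name `split_dpid` and the sample value are hypothetical, chosen only for this example.

```python
def split_dpid(dpid: int):
    # Split a 64-bit datapath ID into (upper 16 bits, MAC address string).
    if not 0 <= dpid < 2 ** 64:
        raise ValueError("a DPID must fit in 64 bits")
    upper16 = dpid >> 48                  # vendor-defined part
    mac = dpid & ((1 << 48) - 1)          # lower 48 bits
    mac_str = ":".join(
        f"{(mac >> shift) & 0xFF:02x}" for shift in range(40, -8, -8)
    )
    return upper16, mac_str


# Made-up DPID: upper bits 0x0001, MAC 0a:0b:0c:0d:0e:0f.
print(split_dpid(0x00010A0B0C0D0E0F))
```

On a device that supports several datapath instances, one plausible arrangement is that the instances share the MAC part and differ in the upper 16 bits, but this depends on the vendor.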

Devices Under Test

We considered 7 hardware datapaths manufactured by 7 major vendors. Due to NDA constraints, we cannot disclose the models of the tested switches or the names of the vendors involved, so in the following we refer to them as S1, S2, ..., S7. All the datapaths were equipped with a forwarding ASIC. Some of them ran a customized version of Open vSwitch (OVS) under the hood, combined with drivers for hardware-accelerated forwarding, whereas others had a proprietary OpenFlow implementation. The main features of the datapaths we considered are summarized in the table below. As a baseline for comparison, we also executed our tests (except the performance tests) on OVS, which is known to have a very good level of compliance with the OpenFlow specification.

Main Features of the tested datapaths

| ID | 10GbE Ports | Switching Fabric Capacity | CAM | OpenFlow version | OVS-based |
|---|---|---|---|---|---|
| S1 | 8 | About 2 Tbps | CAM | 1.3 | No |
| S2 | 128 | About 2 Tbps | TCAM | 1.3 | No |
| S3 | 64 | About 1 Tbps | n/a | 1.3, 1.4 | Yes |
| S4 | 4 | About 500 Gbps | TCAM | 1.3 | No |
| S5 | 4 | About 500 Gbps | TCAM | 1.3 | No |
| S6 | 72 | About 1 Tbps | n/a | 1.3 | No |
| S7 | 40 | About 500 Gbps | n/a | 1.3 | Yes |
| OVS | n/a | n/a (software switch) | No | 1.x | Yes |

Results

Ryu Test

The figure shows the number of test cases that Ryu reported as passed for each datapath considered, distinguishing between tests that verify mandatory features in the OpenFlow specification (e.g., support for matched fields, actions, etc.) and those that verify optional features. The dashed horizontal line is a threshold that corresponds to the total number of test cases (276) for mandatory features included in the Ryu test suite.

The figure shows, for each class, the percentage of tests passed by each datapath with respect to the total number of tests in that class. In accordance with the format of Ryu test reports, we placed in a separate class, set-field, those test cases that modify existing packet headers (e.g., rewrite L2/L3 addresses).
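
The per-class percentage described above can be computed along the following lines. This is a minimal sketch: the function name `pass_rate_by_class` and the input records are hypothetical placeholders, not measured results and not the format of Ryu's report.

```python
from collections import defaultdict


def pass_rate_by_class(results):
    # results: iterable of (test_class, passed) pairs, one per test case.
    totals = defaultdict(int)
    passed = defaultdict(int)
    for test_class, ok in results:
        totals[test_class] += 1
        passed[test_class] += int(ok)
    # Percentage of passed tests with respect to the tests in each class.
    return {c: 100.0 * passed[c] / totals[c] for c in totals}


# Made-up records, for illustration only.
sample = [("action", True), ("action", False), ("match", True), ("meter", False)]
rates = pass_rate_by_class(sample)
print(rates)
```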

We also classified the test cases according to the protocol headers used in the test packets sent to the target datapath, generating the following plot.

Custom Tests

The figure shows the outcome of test ct_flow_insert, namely the time it took to install increasingly large sets of flow entries on the target datapath, starting from an empty flow table.
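
A measurement of this kind can be structured roughly as in the sketch below. It is not the code used for the test: `time_flow_insertion` and the `install_flows` callback are hypothetical, and the callback is assumed to clear the flow table, install the requested number of entries, and return only once the datapath has confirmed them (in OpenFlow this would typically rely on a barrier request and reply).

```python
import time


def time_flow_insertion(install_flows, sizes):
    # Return {n: seconds taken to install n flow entries} for each n in sizes.
    timings = {}
    for n in sizes:
        start = time.perf_counter()
        install_flows(n)          # assumed to block until all n entries are in place
        timings[n] = time.perf_counter() - start
    return timings


# Dummy callback standing in for a real controller application.
installed = []


def fake_install(n):
    installed.clear()                 # start from an empty flow table
    installed.extend(range(n))        # pretend to install n entries


timings = time_flow_insertion(fake_install, [100, 1000, 10000])
print(sorted(timings))
```

Using a monotonic clock such as `time.perf_counter` avoids artifacts caused by wall-clock adjustments during long runs.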

Publications

- Roberto di Lallo, Mirko Gradillo, Gabriele Lospoto, Claudio Pisa, Massimo Rimondini. "On the Practical Applicability of SDN Research". In Melike Erol-Kantarci, Brendan Jennings, Helmut Reiser, editors, Proc. IEEE/IFIP Network Operations and Management Symposium (NOMS 2016), 2016. Accepted for publication.